Smart Work Zone Control and Performance Evaluation Based on Trajectory Data - Thermal UAV Video Data

Project Timeline: 2022 - 2024

Links

Z. Bhuyan, L. Wu, Y. Xie, M. Shirazi, Y. Cao and B. Liu, "Analyzing Highway Work Zone Traffic Dynamics via Drone Thermal Videos and Deep Learning," 2024 IEEE 27th International Conference on Intelligent Transportation Systems (ITSC).

Thermal imaging analysis for tracking vehicles in drone-captured videos, using oriented bounding boxes and SAHI (Slicing Aided Hyper Inference) to improve the detection of small vehicles.
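SAHI runs a detector on overlapping tiles of each frame, so small vehicles cover more pixels per inference. Detections are then mapped back to full-frame coordinates and overlapping predictions are merged. The plain-Python sketch below shows only the tiling and coordinate-shift steps. The function names, the 640 x 512 frame size, the 256-pixel tiles, and the 0.2 overlap ratio are illustrative assumptions. They are not this project's settings and not the SAHI library's own API.

```python
def slice_boxes(img_w, img_h, slice_w, slice_h, overlap=0.2):
    """Return (x0, y0, x1, y1) tiles that cover the frame with the given overlap ratio.

    Tiles at the right and bottom edges are shifted inward so every tile keeps
    the full slice size (when the frame is at least that large).
    """
    step_x = max(1, int(slice_w * (1 - overlap)))
    step_y = max(1, int(slice_h * (1 - overlap)))
    tiles = []
    y = 0
    while True:
        y1 = min(y + slice_h, img_h)
        y0 = max(0, y1 - slice_h)
        x = 0
        while True:
            x1 = min(x + slice_w, img_w)
            x0 = max(0, x1 - slice_w)
            tiles.append((x0, y0, x1, y1))
            if x1 >= img_w:
                break
            x += step_x
        if y1 >= img_h:
            break
        y += step_y
    return tiles


def to_full_frame(box, tile):
    """Shift a detection box from tile coordinates back to full-frame coordinates."""
    dx, dy = tile[0], tile[1]
    return (box[0] + dx, box[1] + dy, box[2] + dx, box[3] + dy)


# Hypothetical 640 x 512 thermal frame split into 256 x 256 tiles with 20% overlap.
tiles = slice_boxes(640, 512, 256, 256, overlap=0.2)
print(len(tiles))  # 9
```

Each tile is passed to the detector separately. Detections from neighboring tiles then overlap and must be merged, a step this sketch leaves out.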

Project Details

- Thermal UAV video analysis

- Vehicle tracking and trajectory analysis

- Oriented bounding box detection

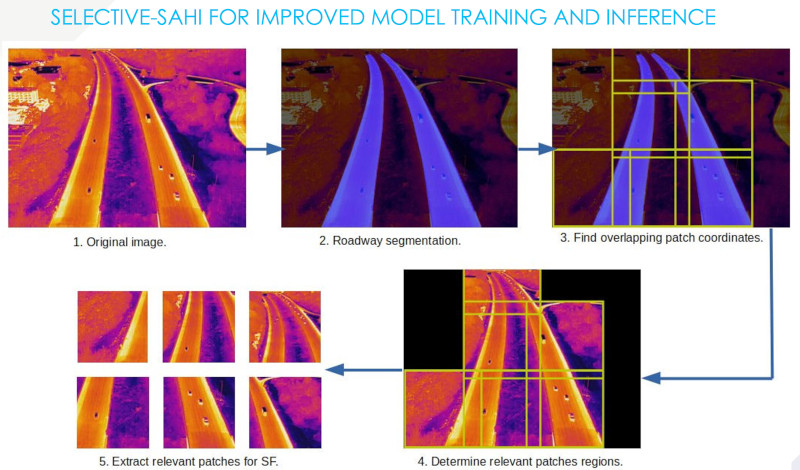

- SAHI (Slicing Aided Hyper Inference) integration

- Work zone performance evaluation

| Medford, MA | Danvers, MA |
| --- | --- |
| I-93, near Exit 21 (https://maps.app.goo.gl/muLRTy4BFLoJyUoj7) | I-93, near Exit 10 (https://maps.app.goo.gl/j4ysifxbs8VmehxP6) |
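The oriented bounding box detection listed under Project Details uses boxes that rotate with the vehicle. They therefore fit cars that are not aligned with the image axes, which is common when a drone views lanes from above. The plain-Python sketch below converts such a box to its four corners. It then compares the box's area with that of the axis-aligned box that would have to enclose it. The parameterization (center, width, height, angle in radians) is one common convention and is assumed here. It is not necessarily the convention used by this project's detector.

```python
import math


def obb_corners(cx, cy, w, h, theta):
    """Corners of a w x h box centered at (cx, cy), rotated by theta radians.

    Uses the standard 2D rotation matrix; in image coordinates (y pointing down)
    a positive theta therefore appears as a clockwise rotation on screen.
    """
    c, s = math.cos(theta), math.sin(theta)
    half = ((w / 2, h / 2), (-w / 2, h / 2), (-w / 2, -h / 2), (w / 2, -h / 2))
    return [(cx + dx * c - dy * s, cy + dx * s + dy * c) for dx, dy in half]


def enclosing_aabb_area(corners):
    """Area of the smallest axis-aligned box containing all corners."""
    xs = [x for x, _ in corners]
    ys = [y for _, y in corners]
    return (max(xs) - min(xs)) * (max(ys) - min(ys))


# A hypothetical 4 x 2 vehicle footprint rotated 45 degrees.
corners = obb_corners(0.0, 0.0, 4.0, 2.0, math.pi / 4)
print(enclosing_aabb_area(corners))  # about 18, versus 8 for the oriented box itself
```

For a diagonal vehicle, the axis-aligned box more than doubles the covered area. In dense traffic, such loose boxes overlap neighboring vehicles, which is one motivation for oriented boxes.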

Deep Learning vs Traditional Computer Vision

Comparing deep learning-based methods with traditional computer vision tracking.

Deep learning models offer superior accuracy and robustness in detecting and tracking vehicles but require significant computational resources and training data. On the other hand, traditional computer vision methods are less resource-intensive and can be quickly implemented but might lack the precision and adaptability of deep learning techniques, especially in complex or dynamic scenes.

Nighttime conditions pose a notable challenge for drone video, because low light and glare from headlights can significantly degrade video quality. On thermal drone footage, basic computer vision tracking methods have shown promise, helped by the distinct vehicle features visible in top-down views. Techniques such as background subtraction, contour detection, and classical trackers like the Kernelized Correlation Filter (KCF) tracker exploit the simple scene layout and the high contrast between vehicles and the road surface. These methods are particularly useful where deep learning models would be too computationally expensive or where extensive training data is unavailable. The trade-off is that their parameters must be adjusted for each new location, and drone movement can sometimes require recalibration as well, which makes them harder to apply in practice.
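The background subtraction and contour detection steps can be sketched in plain Python as follows. Each thermal frame is compared with a running-average background, and the foreground mask is thresholded. Connected foreground pixels are then grouped into vehicle blobs. This is a stand-in, not the project's implementation. A production system would more likely use OpenCV (for example `cv2.createBackgroundSubtractorMOG2` and `cv2.findContours`). Here a 4-connected flood fill replaces true contour tracing, and the KCF tracking stage is omitted. The parameters `alpha`, `threshold`, and `min_area` are hypothetical values. They are exactly the kind of per-site settings that the paragraph above says need recalibration.

```python
from collections import deque


def update_background(bg, frame, alpha=0.05):
    """Running-average background: bg <- (1 - alpha) * bg + alpha * frame."""
    # Note: this naive update also blends vehicle pixels into the background.
    return [[(1 - alpha) * b + alpha * f for b, f in zip(b_row, f_row)]
            for b_row, f_row in zip(bg, frame)]


def foreground_mask(bg, frame, threshold=20):
    """Mark pixels whose intensity differs from the background by more than threshold."""
    return [[abs(f - b) > threshold for b, f in zip(b_row, f_row)]
            for b_row, f_row in zip(bg, frame)]


def find_blobs(mask, min_area=4):
    """Group foreground pixels into 4-connected blobs; drop blobs smaller than min_area."""
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    blobs = []
    for y in range(h):
        for x in range(w):
            if not mask[y][x] or seen[y][x]:
                continue
            seen[y][x] = True
            queue, pixels = deque([(y, x)]), []
            while queue:  # breadth-first flood fill
                py, px = queue.popleft()
                pixels.append((py, px))
                for ny, nx in ((py + 1, px), (py - 1, px), (py, px + 1), (py, px - 1)):
                    if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            if len(pixels) >= min_area:
                ys = [p[0] for p in pixels]
                xs = [p[1] for p in pixels]
                blobs.append({
                    "bbox": (min(xs), min(ys), max(xs), max(ys)),
                    "centroid": (sum(xs) / len(xs), sum(ys) / len(ys)),
                    "area": len(pixels),
                })
    return blobs


# Hypothetical 10 x 10 thermal frame: uniform road at 50, one warm vehicle, one noisy pixel.
background = [[50] * 10 for _ in range(10)]
frame = [row[:] for row in background]
for yy in (2, 3):
    for xx in (4, 5, 6):
        frame[yy][xx] = 120
frame[8][8] = 90  # isolated noise, removed by min_area
blobs = find_blobs(foreground_mask(background, frame))
background = update_background(background, frame)
print(blobs)  # -> [{'bbox': (4, 2, 6, 3), 'centroid': (5.0, 2.5), 'area': 6}]
```

The static-background assumption is also why drone movement hurts this approach. If the camera drifts, the whole road surface differs from the stored background and is flagged as foreground until the model adapts.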

|

|

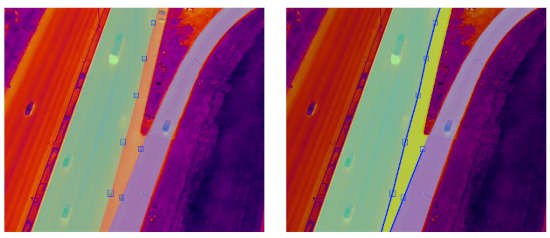

| Medford, MA: Heatmap, OBB + tracking. | Danvers, MA: Heatmap, OBB + Tracking. |

Thermal vs RGB Imaging

Thermal imaging allows drones to capture images in complete darkness or through obstacles such as smoke, which is a significant practical advantage. A comparison of nighttime images taken simultaneously with a drone's regular RGB and thermal cameras illustrates this point: in the RGB images, vehicles are barely visible, whereas in the thermal images they show distinct shapes and thermal signatures. Even with thermal imagery, however, detection and classification remain difficult when objects appear small in drone-captured images.

In our analysis of drone videos at the Medford location, we tracked 140 vehicles as they crossed the rumble strips. Only 40 of them (about 29%) reduced their speed by more than 15% upon reaching the rumble strips. This indicates that rumble strips, while effective for some vehicles, do not consistently produce significant speed adjustments. Lane changes were similarly limited: in the second lane from the left, which also leads directly to a downstream exit ramp, only 22 vehicles (approximately 15%) made immediate lane changes within 160 feet after the rumble strips. This result is difficult to interpret, because a driver who stays in that lane may simply intend to take the exit rather than be unaffected by the rumble strips.
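The two measures above can be computed from extracted trajectories, namely a speed reduction greater than 15% and a lane change within 160 feet past the strips. The Python sketch below shows one way to do so. The trajectory format (`Sample` with a downstream distance, speed, and lane index) is hypothetical. So is the choice to compare mean speeds over 100-foot windows before and after the strips. The source does not specify how speed reduction was measured, so these are assumptions rather than the study's definitions.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Sample:
    x_ft: float       # distance along the roadway, increasing downstream (assumed)
    speed_mph: float
    lane: int         # lane index, 1 = leftmost (assumed convention)


def speed_reduction(traj, strip_x, window_ft=100.0):
    """Fractional drop in mean speed from the window before the strips to the window after."""
    before = [s.speed_mph for s in traj if strip_x - window_ft <= s.x_ft < strip_x]
    after = [s.speed_mph for s in traj if strip_x <= s.x_ft < strip_x + window_ft]
    if not before or not after:
        return None  # trajectory does not cover both windows
    v_before = mean(before)
    return (v_before - mean(after)) / v_before


def changed_lane_within(traj, strip_x, dist_ft=160.0):
    """True if the vehicle leaves its pre-strip lane within dist_ft past the strips."""
    upstream = [s for s in traj if s.x_ft < strip_x]
    if not upstream:
        return False
    start_lane = max(upstream, key=lambda s: s.x_ft).lane
    return any(s.lane != start_lane for s in traj if strip_x <= s.x_ft <= strip_x + dist_ft)


def summarize(trajs, strip_x, reduction_threshold=0.15, dist_ft=160.0):
    """Count vehicles that slowed past the threshold or changed lanes soon after the strips."""
    n = len(trajs)
    slowed = 0
    for t in trajs:
        r = speed_reduction(t, strip_x)
        if r is not None and r > reduction_threshold:
            slowed += 1
    lane_changes = sum(changed_lane_within(t, strip_x, dist_ft) for t in trajs)
    return {"n": n, "slowed": slowed, "slowed_pct": 100 * slowed / n,
            "lane_changes": lane_changes, "lane_change_pct": 100 * lane_changes / n}


# Three hypothetical trajectories sampled every 25 ft, with the strips at x = 1000 ft.
xs = range(900, 1301, 25)
a = [Sample(x, 60 if x < 1000 else 50, 2) for x in xs]                     # slows about 17%
b = [Sample(x, 60 if x < 1000 else 58, 2 if x < 1050 else 3) for x in xs]  # changes lane 50 ft past strips
c = [Sample(x, 60, 2 if x < 1300 else 3) for x in xs]                      # changes lane 300 ft past strips
print(summarize([a, b, c], strip_x=1000))
```

The window length and the choice of mean speed versus spot speed are the main judgment calls. With short, noisy trajectories, small changes to either can move vehicles across the 15% threshold.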

At the Danvers location, trajectory analysis of 100 vehicles showed a consistent pattern: a similar proportion (15%) of drivers made immediate lane changes after encountering the rumble strips. This consistency across both locations suggests a broader trend in which rumble strips prompt only a relatively small subset of drivers to adjust their speed or change lanes.